As biomedical data sets grow ever larger, researchers like May Wang are borrowing a powerful analytics tool from the realm of artificial intelligence (AI) to make sense of them. By using deep learning to dig into these mountains of information, they hope to open doors to the next generation of precision health care.

“Basically, deep learning tries to imitate the way our brain works,” said Wang, professor in the Wallace H. Coulter Department of Biomedical Engineering at Georgia Tech and Emory University. “We know that this kind of AI has great potential for clinical decision support — for personalized, predictive, and preventive medicine.”

Machine learning, a branch of AI, uses algorithms that learn from data automatically, applying statistical techniques to spot patterns and then act on them. Deep learning is a subset of machine learning that goes a bit further, using artificial neural networks inspired by the biology of the human brain. It follows a pattern of logic that loosely mimics how a human might arrive at a conclusion, only much faster.

“The ultimate, long-term goal of this research would be to provide clinicians with a better tool for predicting the different stages of Alzheimer’s disease,” said Wang, principal investigator of the Biomedical Informatics and Bio-imaging Laboratory (Bio-MIBLab). “We aren’t there yet. But we feel that this work is like an early spark in a larger explosion of research demonstrating the power of deep learning.”

Wang and her colleagues tested the concept and recently reported the results in the journal Scientific Reports.

Wang’s team used data gathered from the Alzheimer’s Disease Neuroimaging Initiative (ADNI), a multicenter study of 2,000-plus patients (originated by the University of Southern California) that aims to develop clinical, imaging, genetic, and biochemical biomarkers for the early detection and tracking of Alzheimer’s.

Most studies of Alzheimer’s, as well as mild cognitive disorders, use a single mode of data — imaging, for example — to make predictions of what may lie ahead, pathologically, in a patient’s neurological journey.

Wang and her collaborators wanted to know if deep learning could combine multiple kinds, or modalities, of data to offer a fuller picture. It could: their multimodal model outperformed the traditional single-modality models, “significantly improving our prediction accuracy, providing a more holistic view of disease progression,” Wang said.

The team used cross-sectional magnetic resonance imaging (MRI); whole genome sequencing data; and clinical test data, like demographics, neurological exams, cognitive assessments, biomarkers, and medication.
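The article does not describe the model's architecture, so the following is only a minimal sketch of one common way to combine modalities: feature-level fusion, in which each modality is first reduced to a feature vector and the vectors are then concatenated before a final prediction step. The function names, the feature values, and the simple linear scorer are all hypothetical and stand in for whatever networks a real system would use.

```python
from typing import Dict, List

# Fixed modality order so the fused vector always has the same layout.
# (Illustrative names; not taken from the study's code.)
MODALITIES = ["imaging", "genetic", "clinical"]


def fuse(features_by_modality: Dict[str, List[float]],
         order: List[str]) -> List[float]:
    """Concatenate per-modality feature vectors in a fixed order."""
    fused: List[float] = []
    for name in order:
        fused.extend(features_by_modality[name])
    return fused


def linear_score(fused: List[float], weights: List[float],
                 bias: float = 0.0) -> float:
    """Toy stand-in for a classifier head: a weighted sum of fused features."""
    if len(fused) != len(weights):
        raise ValueError("weights must match the fused feature length")
    return sum(x * w for x, w in zip(fused, weights)) + bias


# Made-up feature vectors for a single hypothetical patient.
patient = {
    "imaging": [0.2, 0.7],
    "genetic": [1.0],
    "clinical": [0.5, 0.1, 0.9],
}

fused = fuse(patient, MODALITIES)
score = linear_score(fused, weights=[1.0] * len(fused))
```

A single-modality model would see only one of the three vectors; the fused vector lets the final step weigh evidence from all of them at once.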

Still, the study was limited to a relatively small number of patients. As Wang explained, all 2,004 patients in the ADNI database had clinical data, but only 503 had imaging data and 808 had genetic data; just 220 patients had all three data modalities.
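To see why requiring all three modalities shrinks the cohort so sharply, the short sketch below uses the counts quoted above to compute each group's share of the full database, then shows the underlying idea (a set intersection of patient IDs) on a toy example. The patient IDs are invented for illustration and the helper name `complete_cases` is hypothetical.

```python
from functools import reduce
from typing import Dict, Iterable, Set

# Patient counts as reported in the article.
counts = {"clinical": 2004, "imaging": 503, "genetic": 808, "all_three": 220}
total = counts["clinical"]

# Share of the full database covered by each group.
shares = {name: n / total for name, n in counts.items()}
for name, share in shares.items():
    print(f"{name}: {counts[name]} patients ({share:.1%})")


def complete_cases(ids_by_modality: Dict[str, Iterable[int]]) -> Set[int]:
    """Return the IDs of patients present in every modality."""
    return reduce(set.intersection,
                  (set(ids) for ids in ids_by_modality.values()))


# Toy IDs, made up for illustration only.
toy = {
    "clinical": [1, 2, 3, 4, 5],
    "imaging": [2, 3, 5],
    "genetic": [1, 3, 5],
}
usable = complete_cases(toy)
```

With the reported counts, only about 11 percent of the patients in the database had all three kinds of data.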

“That isn’t a large group,” Wang said. “My hope is that this study and others will inspire hospitals and health care organizations to collect multiple modalities of data from the same cohorts of patients so that we can develop a more complete picture of what disease progress is like. We need to test our models on larger, richer data sets.”

It looks as if she will get that opportunity. On the heels of the paper’s publication, three more journals invited her team to write a follow-up paper on the ADNI work, “so I feel like we are moving in the right direction,” Wang said. “This is an important work.”

This research was supported in part by the Petit Institute Faculty Fellow Fund, Carol Ann and David D. Flanagan Faculty Fellow Research Fund, Amazon Faculty Research Fellowship, and the China Scholarship Council (Grant No. 201406010343).

CITATION: Janani Venugopalan, Li Tong, Hamid Reza Hassanzadeh, May Wang, “Multimodal deep learning models for early detection of Alzheimer’s” (Scientific Reports, 2021)

Media Contact

Keywords

Latest BME News

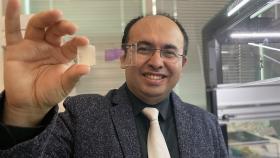

Jo honored for his impact on science and mentorship

The department rises to the top in biomedical engineering programs for undergraduate education.

Commercialization program in Coulter BME announces project teams who will receive support to get their research to market.

Courses in the Wallace H. Coulter Department of Biomedical Engineering are being reformatted to incorporate AI and machine learning so students are prepared for a data-driven biotech sector.

Influenced by her mother's journey in engineering, Sriya Surapaneni hopes to inspire other young women in the field.

Coulter BME Professor Earns Tenure, Eyes Future of Innovation in Health and Medicine

The grant will fund the development of cutting-edge technology that could detect colorectal cancer through a simple breath test

The surgical support device landed Coulter BME its 4th consecutive win for the College of Engineering competition.